GPU support), in the above selector, choose OS: Linux, Package: Pip, Language: Python and Compute Platform: CPU. To install PyTorch via pip, and do not have a CUDA-capable or ROCm-capable system or do not require CUDA/ROCm (i.e. PyTorch via Anaconda is not supported on ROCm currently. Then, run the command that is presented to you. Often, the latest CUDA version is better. To install PyTorch via Anaconda, and you do have a CUDA-capable system, in the above selector, choose OS: Linux, Package: Conda and the CUDA version suited to your machine. GPU support), in the above selector, choose OS: Linux, Package: Conda, Language: Python and Compute Platform: CPU. To install PyTorch via Anaconda, and do not have a CUDA-capable or ROCm-capable system or do not require CUDA/ROCm (i.e. Tip: If you want to use just the command pip, instead of pip3, you can symlink pip to the pip3 binary. If you decide to use APT, you can run the following command to install it: However, if you want to install another version, there are multiple ways: If you want to use just the command python, instead of python3, you can symlink python to the python3 binary. Tip: By default, you will have to use the command python3 to run Python. Python 3.8 or greater is generally installed by default on any of our supported Linux distributions, which meets our recommendation. The specific examples shown were run on an Ubuntu 18.04 machine. An example difference is that your distribution may support yum instead of apt.

The install instructions here will generally apply to all supported Linux distributions. PyTorch is supported on Linux distributions that use glibc >= v2.17, which include the following: Prerequisites Supported Linux Distributions It is recommended, but not required, that your Linux system has an NVIDIA or AMD GPU in order to harness the full power of PyTorch’s CUDA support or ROCm support. Depending on your system and compute requirements, your experience with PyTorch on Linux may vary in terms of processing time. Your comments might help others.PyTorch can be installed and used on various Linux distributions. I have tried my best to layout step-by-step instructions, In case I miss any or you have any issues installing, please comment below. This completes PySpark install in Anaconda, validating PySpark, and running in Jupyter notebook & Spyder IDE. Spark = ('').getOrCreate()ĭf = spark.createDataFrame(data).toDF(*columns) Post install, write the below program and run it by pressing F5 or by selecting a run button from the menu. If you don’t have Spyder on Anaconda, just install it by selecting Install option from navigator. You might get a warning for second command “ WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform” warning, ignore that for now. Run the below commands to make sure the PySpark is working in Jupyter. If you get pyspark error in jupyter then then run the following commands in the notebook cell to find the PySpark.

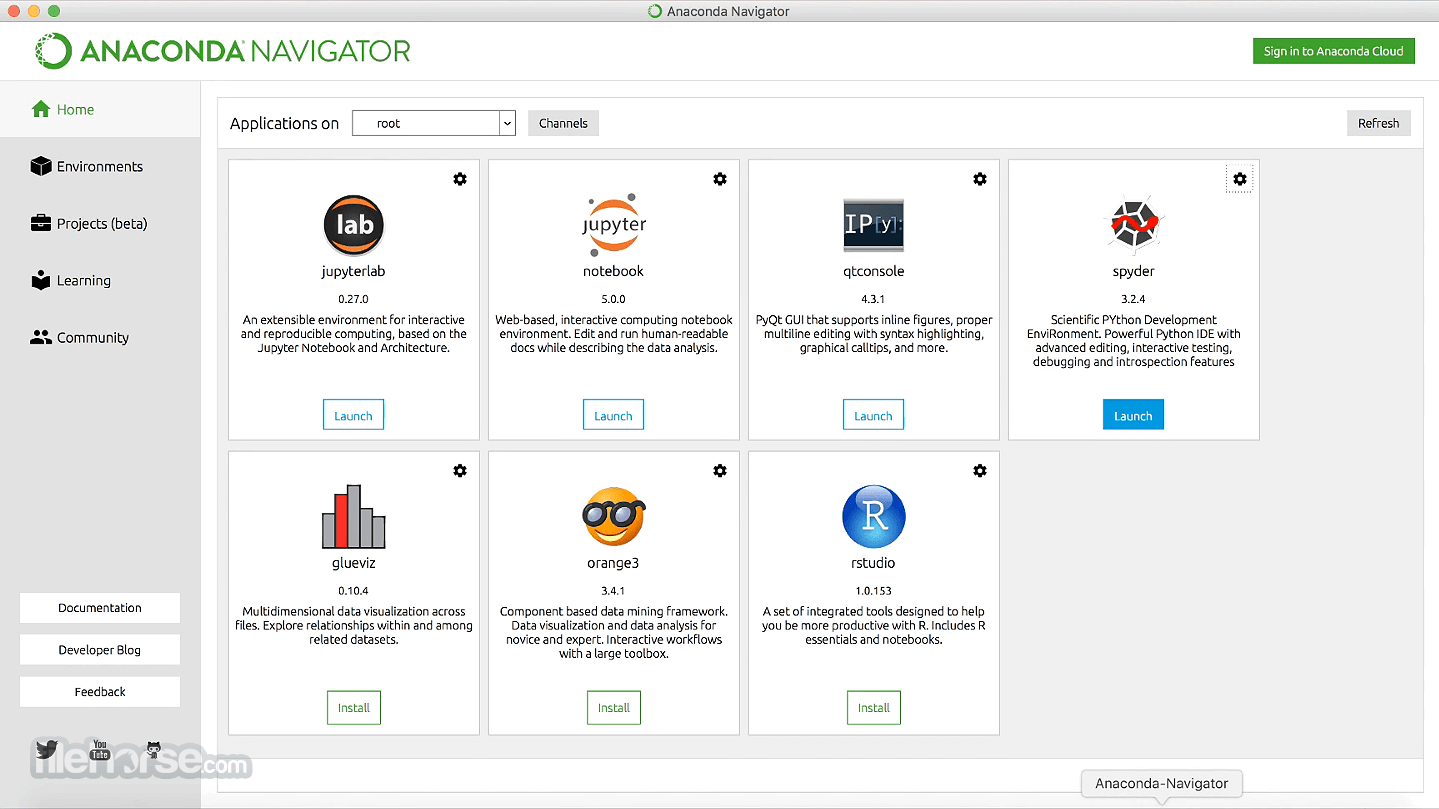

On Jupyter, each cell is a statement, so you can run each cell independently when there are no dependencies on previous cells. Now select New -> PythonX and enter the below lines and select Run. This opens up Jupyter notebook in the default browser. Post-install, Open Jupyter by selecting Launch button. If you don’t have Jupyter notebook installed on Anaconda, just install it by selecting Install option. Anaconda Navigator is a UI application where you can control the Anaconda packages, environment e.t.c. and for Mac, you can find it from Finder => Applications or from Launchpad. Now open Anaconda Navigator – For windows use the start or by typing Anaconda in search. With the last step, PySpark install is completed in Anaconda and validated the installation by launching PySpark shell and running the sample program now, let’s see how to run a similar PySpark example in Jupyter notebook. Now access from your favorite web browser to access Spark Web UI to monitor your jobs. For more examples on PySpark refer to PySpark Tutorial with Examples. Note that SparkSession 'spark' and SparkContext 'sc' is by default available in PySpark shell.ĭata = Enter the following commands in the PySpark shell in the same order. Let’s create a PySpark DataFrame with some sample data to validate the installation.
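Before running an install command, you can check the prerequisites stated on this page (glibc >= v2.17 and Python 3.8 or greater) from Python itself. The sketch below is a minimal, standard-library-only example; the helper names (`parse_version`, `glibc_ok`, `is_installed`, `check_prerequisites`) are hypothetical and are not part of PyTorch or PySpark. It also reports whether `python3`/`pip3` are on your PATH and whether the `torch` and `pyspark` packages are visible to the current interpreter. `platform.libc_ver()` may return an empty version on systems that do not use glibc, in which case the glibc check reports `False` rather than guessing.

```python
import importlib.util
import platform
import re
import shutil
import sys

# Minimums taken from this page (assumed to be the only ones you care about):
# glibc >= v2.17 for PyTorch on Linux, and Python 3.8 or greater.
MIN_GLIBC = (2, 17)
MIN_PYTHON = (3, 8)


def parse_version(text):
    """Turn a dotted version string such as '2.31' into (2, 31).

    Each component keeps only its leading digits; parsing stops at the
    first component without any, so '' becomes ().
    """
    parts = []
    for piece in text.split("."):
        match = re.match(r"\d+", piece)
        if match is None:
            break
        parts.append(int(match.group()))
    return tuple(parts)


def glibc_ok(version_text, minimum=MIN_GLIBC):
    """Return True if a glibc version string is at least `minimum`."""
    parsed = parse_version(version_text)
    return bool(parsed) and parsed >= minimum


def is_installed(module_name):
    """Return True if the current interpreter can locate `module_name`."""
    return importlib.util.find_spec(module_name) is not None


def check_prerequisites():
    """Collect the facts discussed on this page for the current machine."""
    lib, lib_version = platform.libc_ver()
    on_glibc = lib == "glibc"
    return {
        "python_version": platform.python_version(),
        "python_ok": sys.version_info[:2] >= MIN_PYTHON,
        "glibc_version": lib_version if on_glibc else None,
        "glibc_ok": on_glibc and glibc_ok(lib_version),
        "python3_on_path": shutil.which("python3") is not None,
        "pip3_on_path": shutil.which("pip3") is not None,
        "torch_installed": is_installed("torch"),
        "pyspark_installed": is_installed("pyspark"),
    }


if __name__ == "__main__":
    for key, value in check_prerequisites().items():
        print(f"{key}: {value}")
```

Saved as a script (for example `check_prereqs.py`, a hypothetical name) and run with `python3 check_prereqs.py`, it prints one line per check. Run it with the same interpreter or Anaconda environment you installed into, because `is_installed` only sees packages visible to that interpreter.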
